Before going into additional details about what a Jupyter Notebook is, I would first like to show a visual comparison of the analytical functionality offered by Azure Kusto Query Language (KQL) queries versus a Jupyter Notebook with Python/msticpy/Kqlmagic.

If your analytical requirements are limited to those typical of SIEM platforms (querying data, extracting statistics for various fields, building some correlation rules and visualizing reports), then KQL queries are probably enough, in the same way that if you need to screw in 100 bolts, a power drill is the right tool and you don’t need anything else. However, if you wish not just to extract the data but also to manipulate it with libraries available through popular programming languages like Python, C#, R, etc., with a wide range of possibilities to import, enrich, process and visualize datasets, then an environment like Jupyter Notebooks is the right tool. Yet, as in the picture above, you need to know how to use these extra tools and to understand which one is right for the job.

What is a Jupyter Notebook? The Jupyter Notebook is a programming tool for interactively developing and presenting data science projects. A notebook is divided into sections (aka “cells”). A cell can combine programming code and its output, visualisations, comments, annotations, mathematical equations, and other rich media. The user can go back and forth between different cells, re-run them individually and adjust them on the fly. This intuitive workflow promotes iterative and rapid development, making notebooks an increasingly popular choice for data science and analysis. As an open source project (Project Jupyter), notebooks are completely free and are increasingly adopted by many analytical platforms. Jupyter Notebooks support over 40 programming languages, including the popular Python, C# and R.

So, if Jupyter Notebooks are so great, what is the catch? The catch is that you need a certain skill level in one of those 40 programming languages. Some programming libraries are only available for specific languages, so if you need them you have to learn one of those languages. However, one of the most common languages is Python, which is relatively easy to learn and has practically unlimited training and support options on the Internet for any kind of Python-related task. It is also one of the languages with dedicated libraries for working with Kusto query syntax: Kqlmagic, and msticpy (pronounced “mystic pie”), a set of security-related libraries that can enrich the data extracted by Kqlmagic.

Jupyter Notebooks can run on a local computer (and they will use the local processing power) or can be hosted on websites or cloud platforms such as Azure and use the processing power available from the provider.

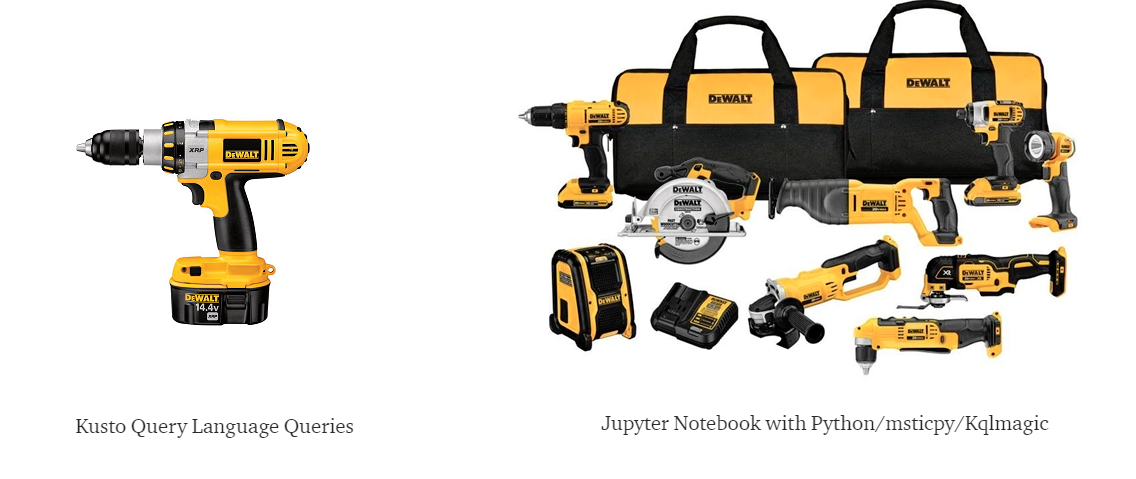

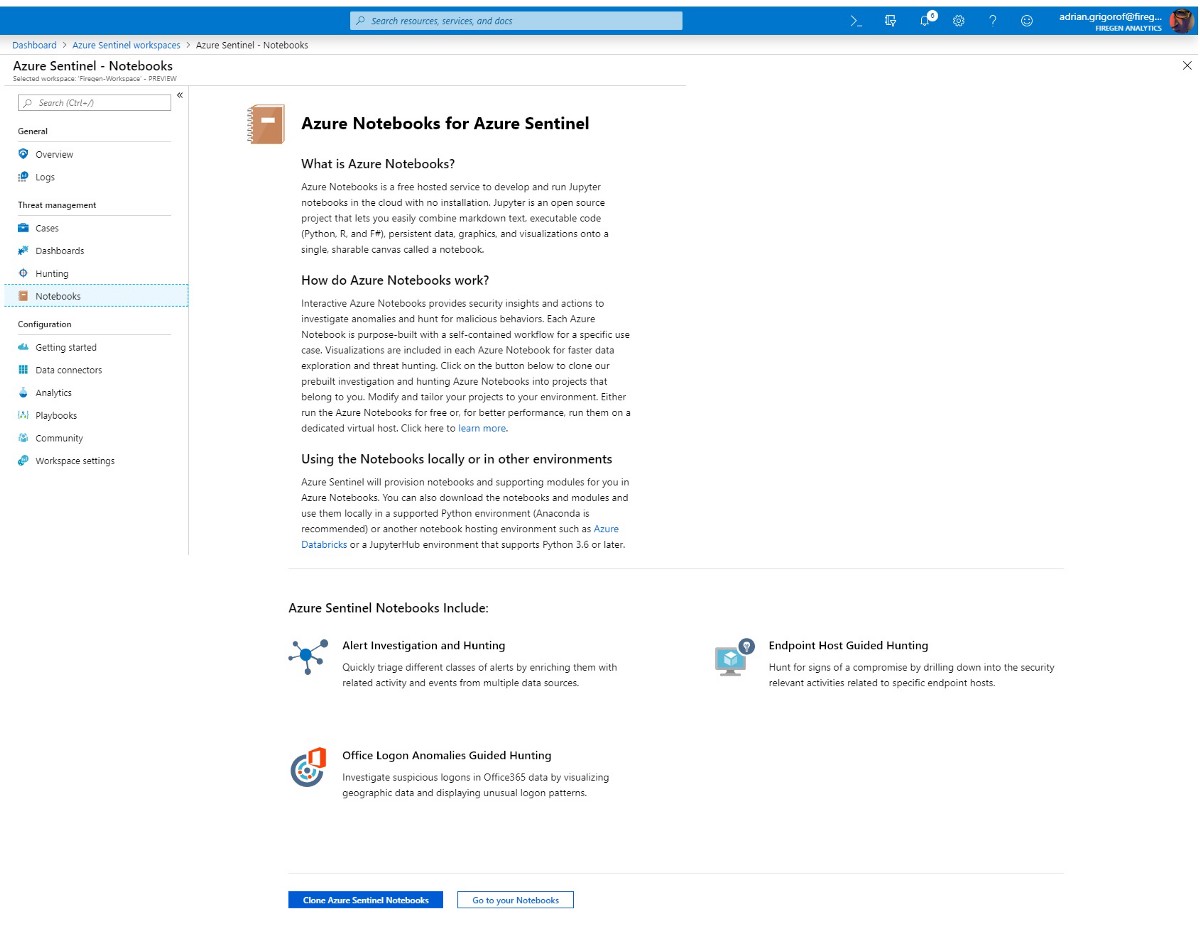

In Azure Sentinel, notebooks are accessible as one of the features. Clicking on Notebooks opens a description page with links to the Azure Notebooks blade:

The user has the option to go straight to Azure Notebooks or to clone the existing Azure Sentinel notebooks first. The existing notebooks are great material for understanding how best to use notebooks to extract and process data from the Sentinel logs, so there is no need to reinvent the wheel.

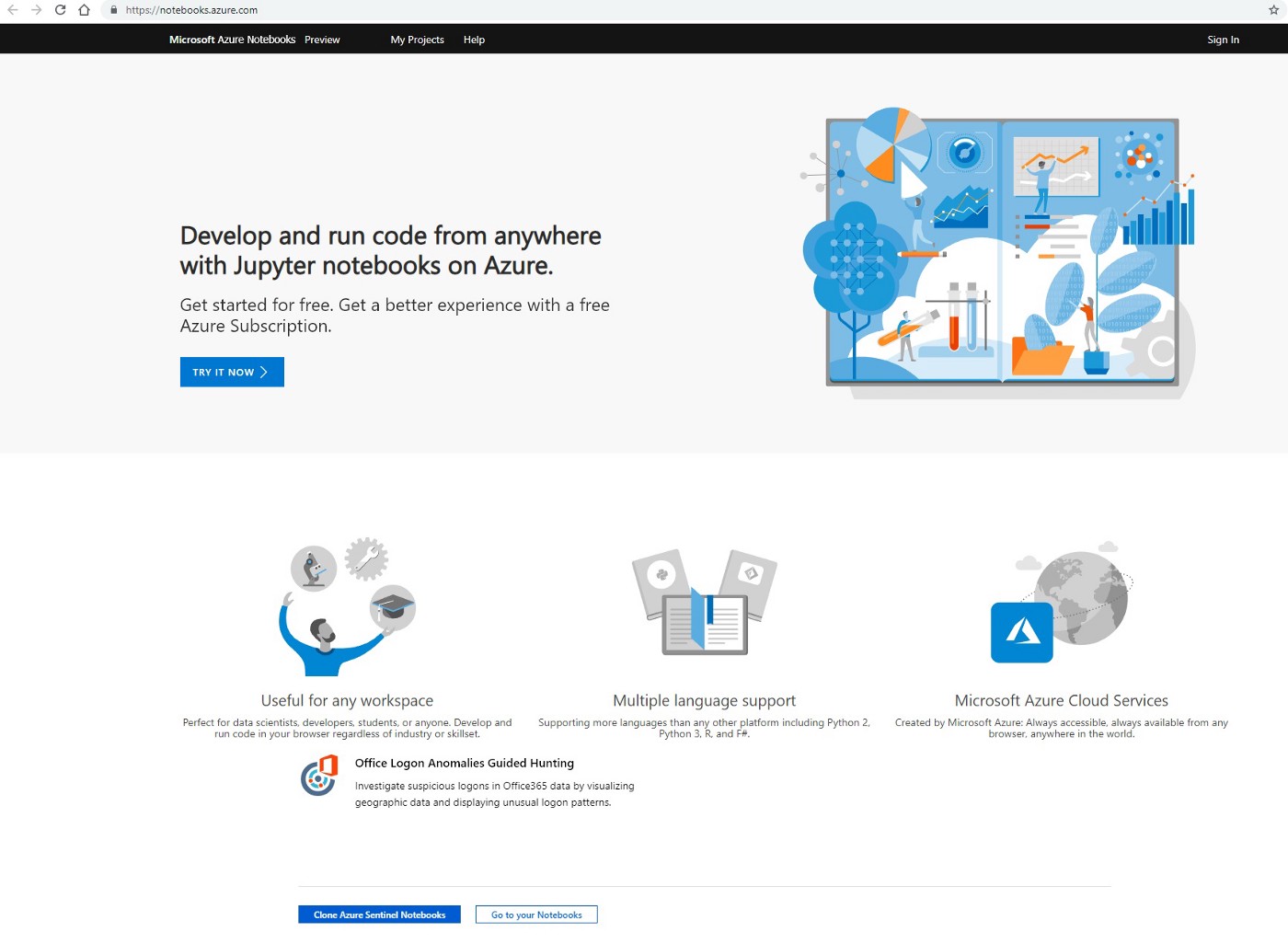

The Azure Notebooks service is offered free of charge at the time of writing and can be used for other applications, not just Sentinel. An account is required in order to access this service.

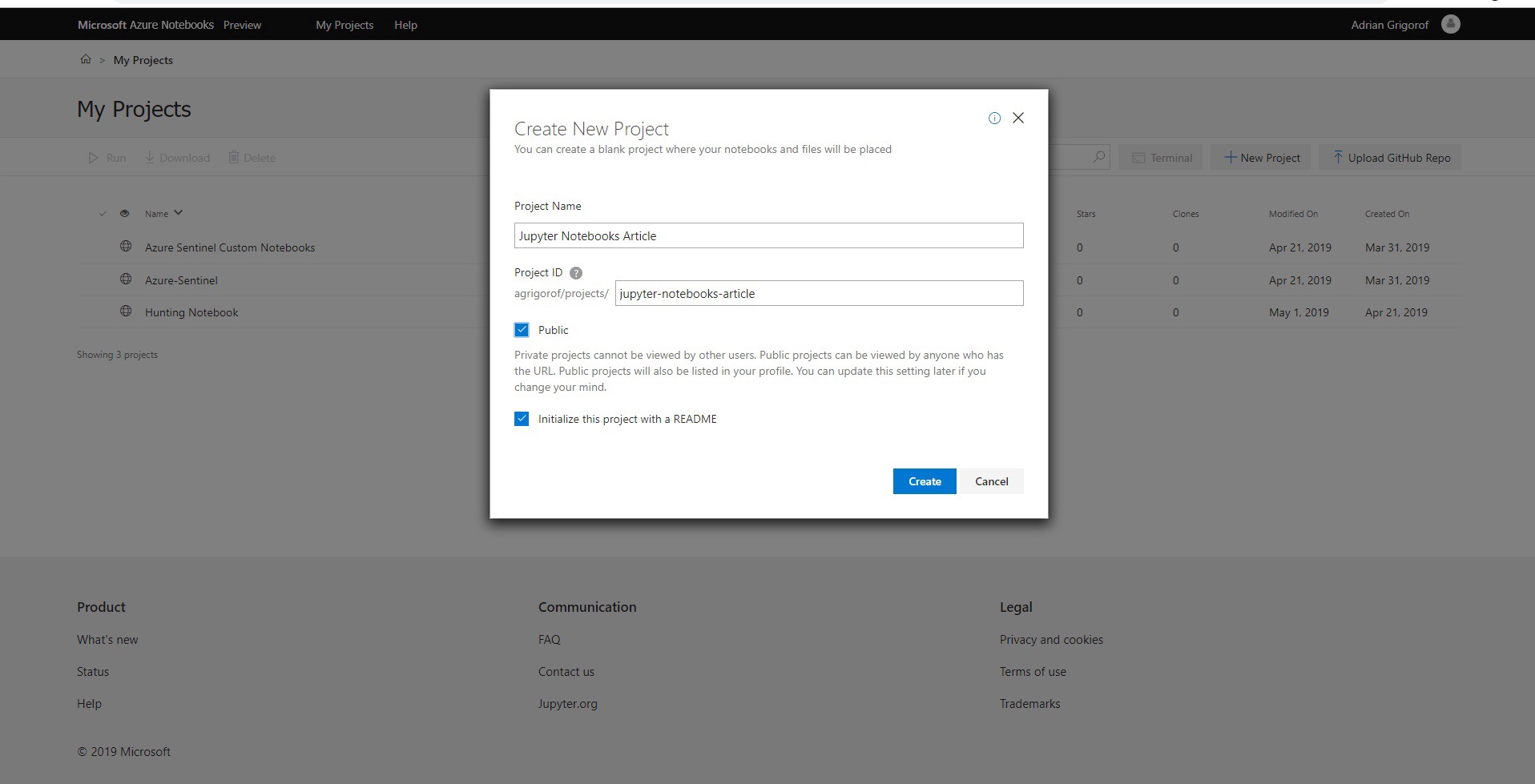

The first interface is the list of projects. A project is used to organize various resources under one folder. For this article, I will create a project called “Jupyter Notebooks Article” and I will make it public (the link will be listed again at the end of the article).

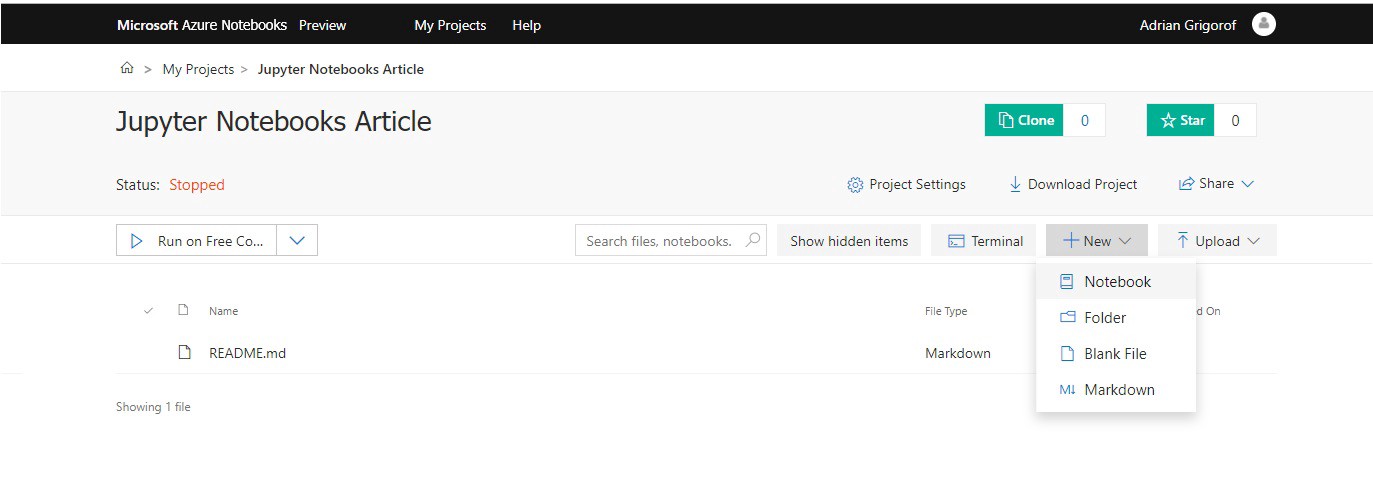

The newly created project will only have one file, README.md, that I can use to add some information about this project, but for now I will leave it with the default content. Be cautious about the “Public” checkbox: don’t leave it on if the project contains sensitive data.

From the menu, I will click on New in order to create a new notebook:

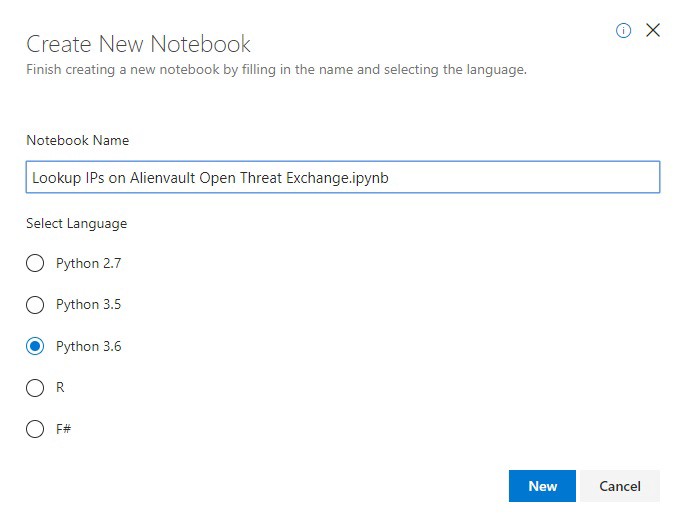

The new notebook creation screen will ask for a name and the programming language that will be used. In my case I will set the name as “Lookup IPs on Alienvault Open Threat Exchange” and select Python 3.6:

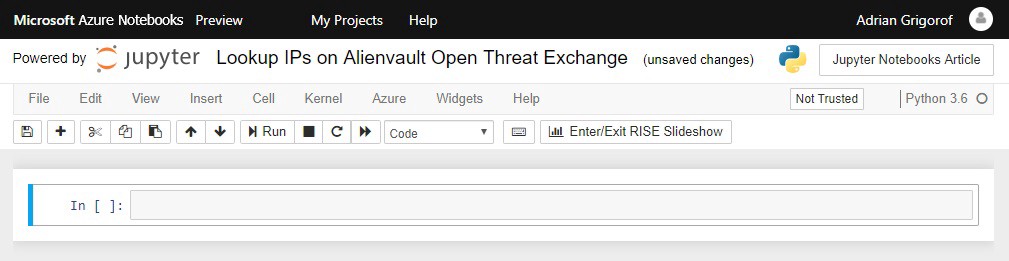

A new notebook is created (file name Lookup IPs on Alienvault Open Threat Exchange.ipynb) and we can start using it.

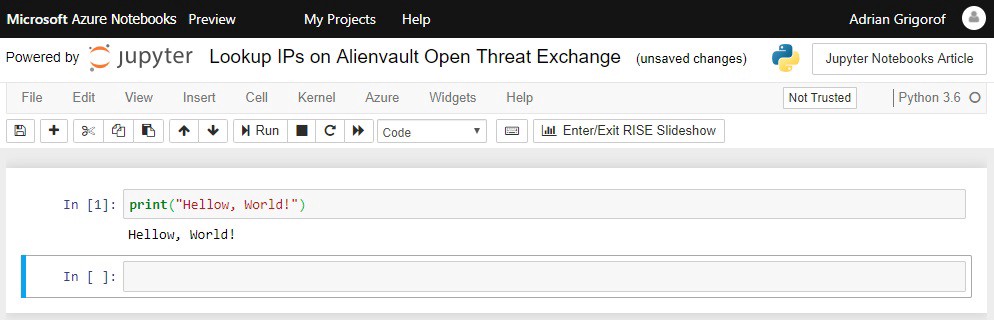

At this point, we can enter any Python command in the first cell and execute it by clicking on the Run button or by pressing the Shift+Enter shortcut. For example, I can execute the command print("Hello, World!") to display the traditional message:

As one can see, the output of the command is printed outside the cell. Because I used Shift+Enter to run it, it took me to the next cell. I can add as many commands as I want in just one cell, but if I want to execute them gradually (visualizing the results and troubleshooting if needed) I need to put the commands in different cells. I can always go back to a cell and change its content (and I will do just that in my notebook).

As I mentioned before, in order to extract data from Azure Sentinel and perform various security-related tasks to process and enrich the data, we need to import a number of libraries into our notebook. The notebook at this time only knows the standard Python commands.

Microsoft provides a sample notebook that contains the commands to import these libraries (to configure the “environment” needed by Azure Sentinel). In the notebook comments they provide additional methods to import them, depending on how the notebook is used.

https://github.com/Azure/Azure-Sentinel/blob/master/Notebooks/ConfiguringNotebookEnvironment.ipynb

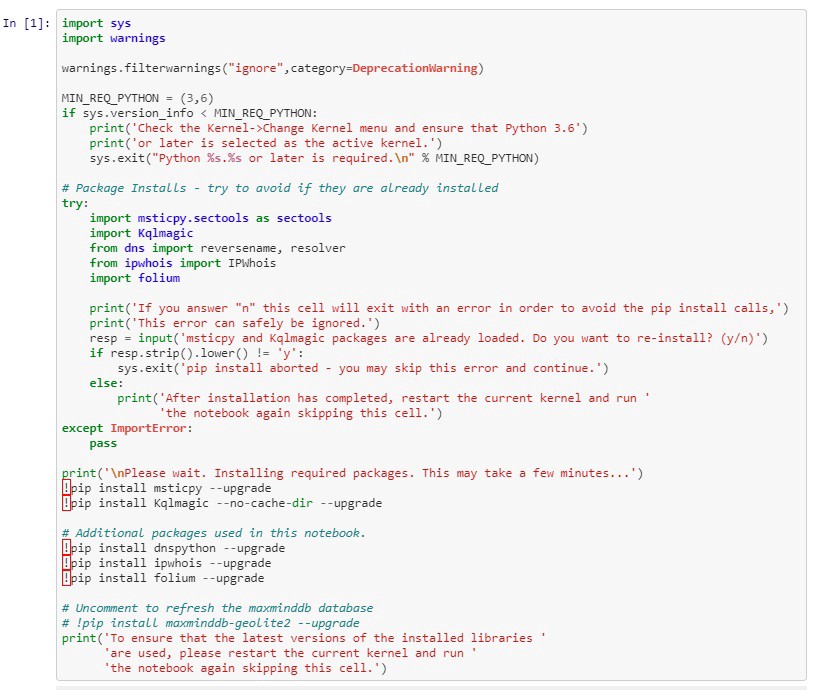

In my notebook, I will paste and run the code copied from the initialization cell from the notebook provided by Microsoft:

This code installs several components used to analyze Azure Sentinel data:

- msticpy.sectools: Microsoft Threat Intelligence Python Security Tools
- Kqlmagic: enables notebook queries with Kusto Query Language
- DNS functions (reversename, resolver)
- Whois functions (IPWhois)
- folium: data visualization

In my example I will only use the first two but the others can be seen in action in the sample notebooks from Microsoft.
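Before running the installation cell, it can be handy to see which of these components are already present in the notebook environment. The snippet below is only a minimal sketch of such a check, not the code from the Microsoft notebook. The mapping from import names to pip package names (for example `dns` to `dnspython`) is my assumption and should be checked against the Microsoft notebook.

```python
import importlib.util

# Import name -> pip package name for the components listed above.
# This mapping is an assumption; the Microsoft notebook is the
# authoritative source for what gets installed.
REQUIRED_MODULES = {
    "msticpy": "msticpy",
    "Kqlmagic": "Kqlmagic",
    "dns": "dnspython",
    "ipwhois": "ipwhois",
    "folium": "folium",
}


def missing_packages(modules=REQUIRED_MODULES):
    """Return the pip package names whose import module cannot be found."""
    return [
        package
        for module, package in modules.items()
        if importlib.util.find_spec(module) is None
    ]


missing = missing_packages()
if missing:
    print("Install with: pip install " + " ".join(missing))
else:
    print("All components are already installed.")
```

In a notebook cell, the suggested pip command can then be run by prefixing it with an exclamation mark.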

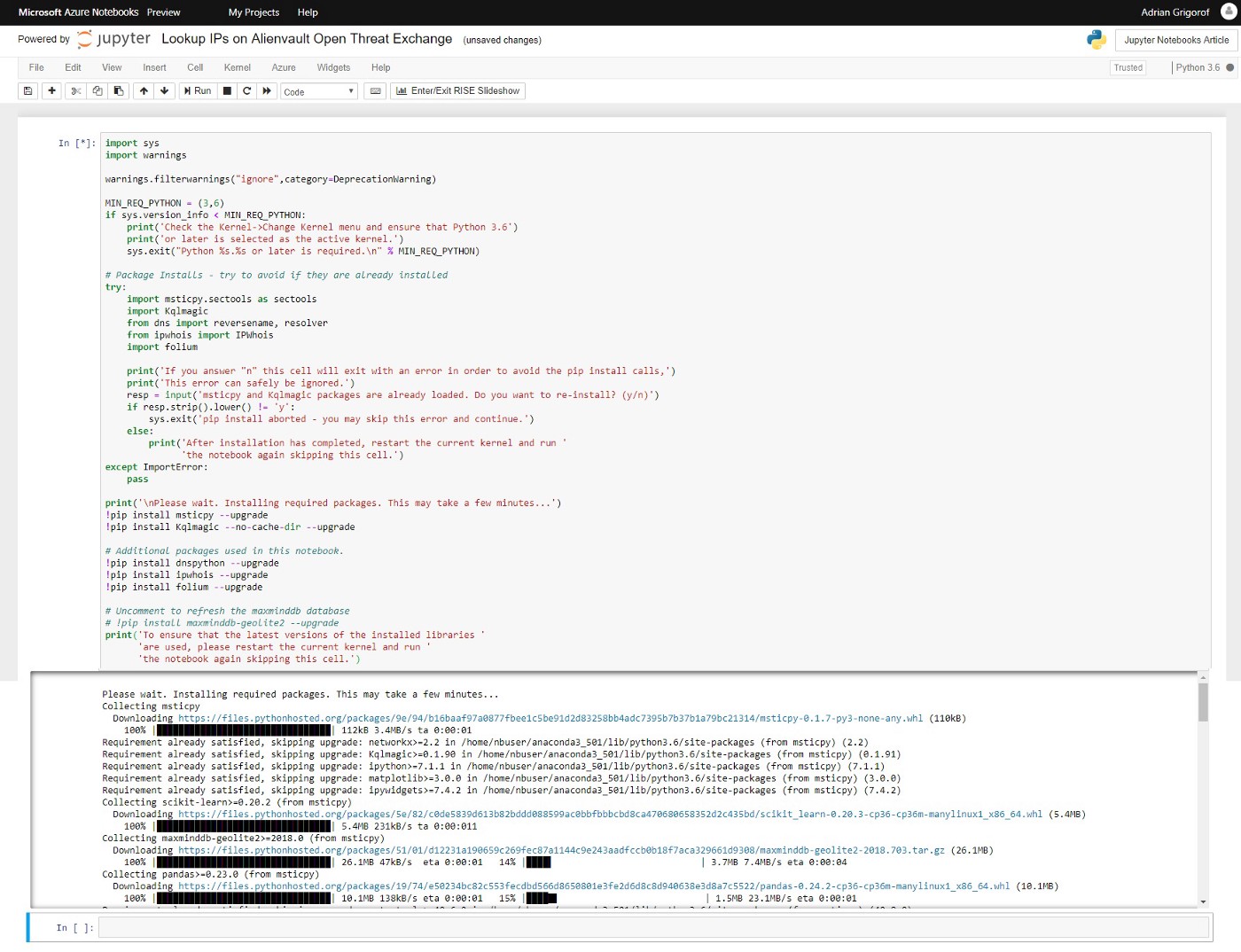

Once the code starts running, one should see the various components being downloaded and installed:

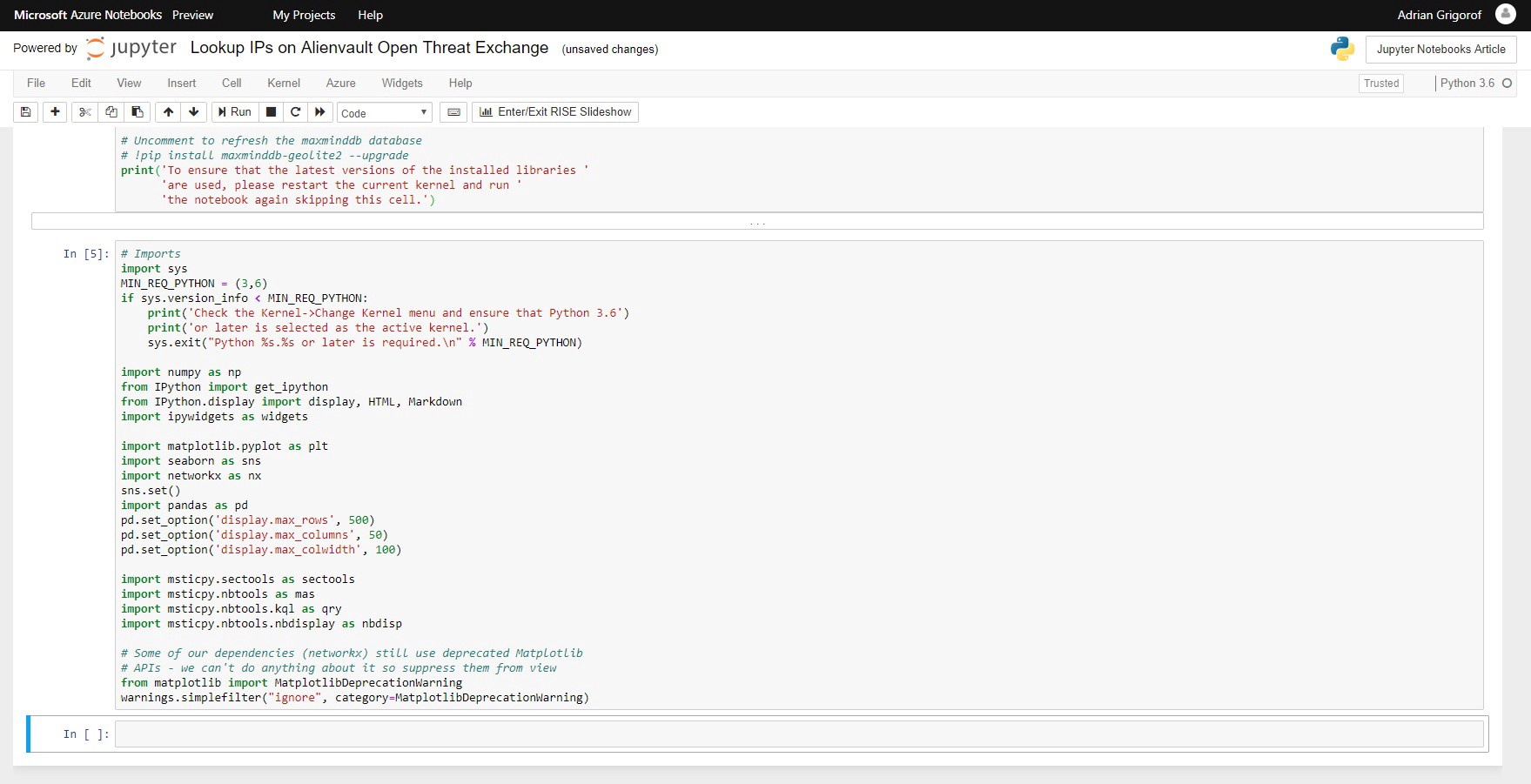

Once the download and installation is finished, we are ready to use all the tools available in those libraries. In the next cell we have the code to load the libraries that we will use in this example:

The task that I want to achieve in this notebook is to extract, from the logs of one Palo Alto and one pfSense firewall, a list of IP addresses to which my internal hosts connected, and to verify them against the Alienvault Open Threat Exchange (OTX) platform to see if any of the destination IPs are listed as potentially malicious. For example, if my internal host 192.168.5.25 connected to 109.104.79.48, I want to know if 109.104.79.48 was tagged in any Alienvault OTX pulses. This may be an indicator of malicious activity on my server: maybe a cryptominer is installed, or some other malware. This type of lookup would be difficult to perform with a regular Kusto query within Azure Sentinel.

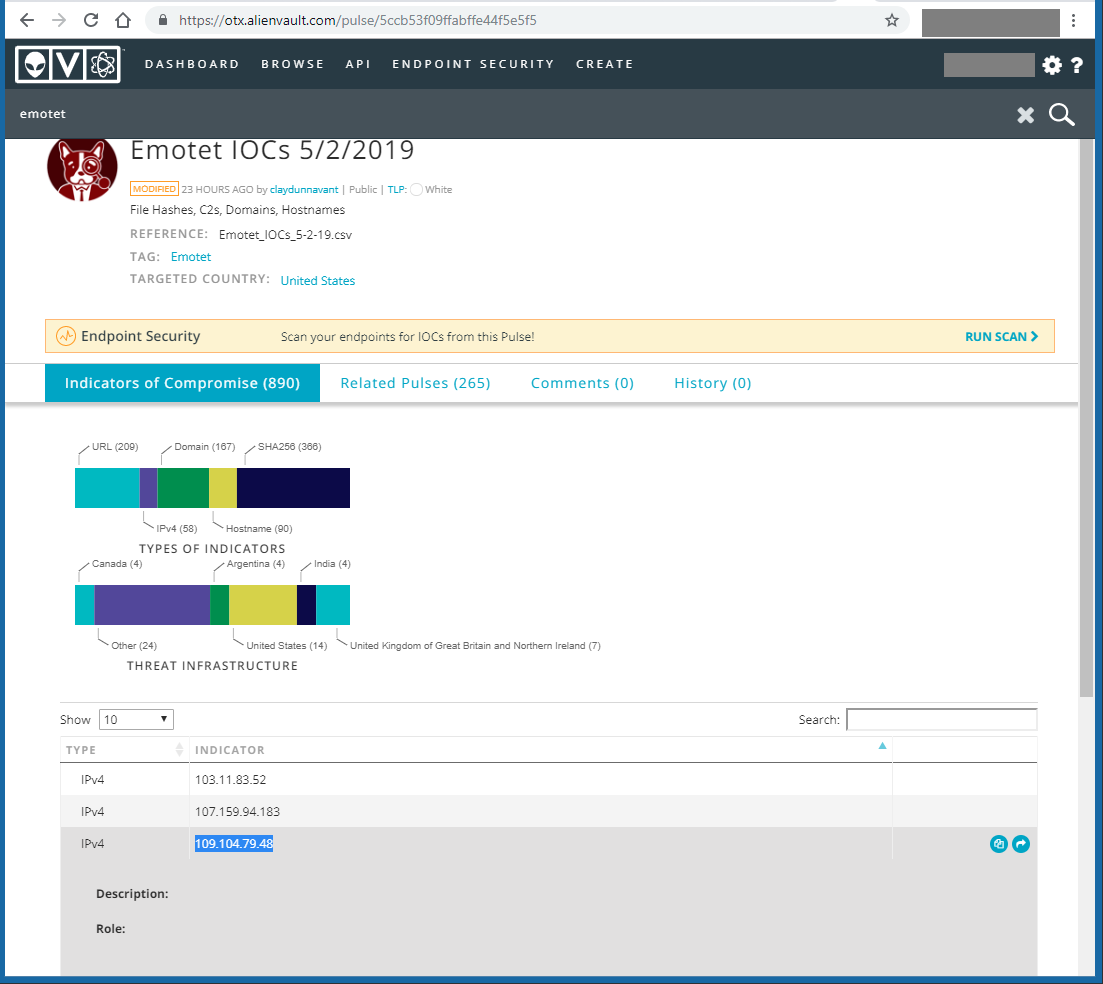

109.104.79.48 is not a random IP address. To make it more realistic and get at least one match on Alienvault, I searched indicators of compromise for the Emotet malware on Alienvault and found several, 109.104.79.48 included:

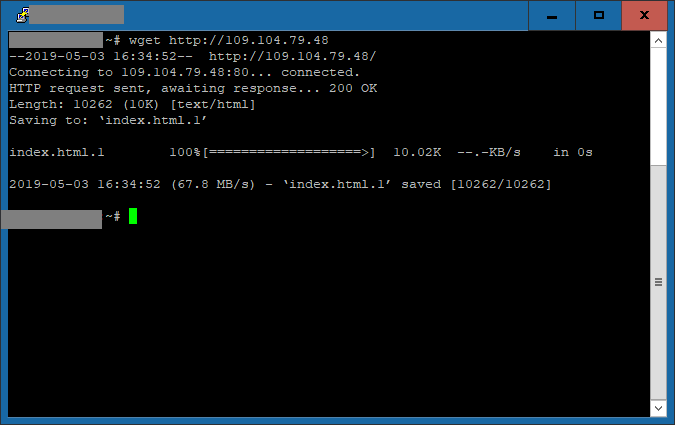

From one of the internal hosts (a Linux machine), I executed a wget command to access that IP over http:

This will ensure that I have some sample data that should match an AlienVault OTX pulse. By the way, for those that are not familiar with Alienvault OTX, here is a quote from Wikipedia:

“Open Threat Exchange is the world’s largest crowd-sourced computer-security platform with more than 80,000 participants in 140 countries who share more than 19 million potential threats daily. It’s free to use.”

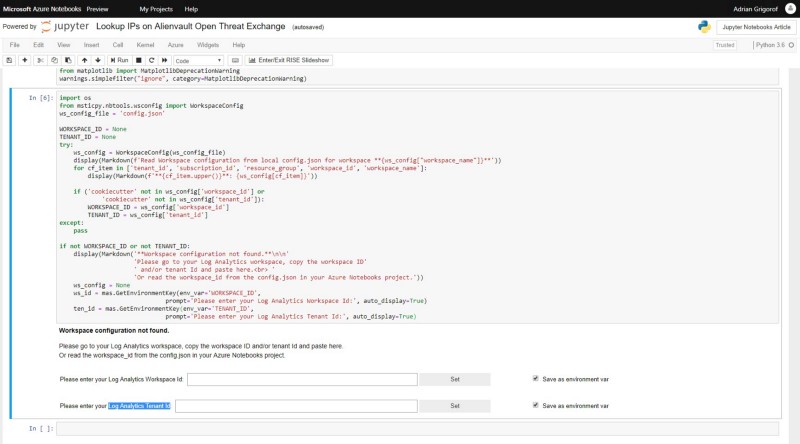

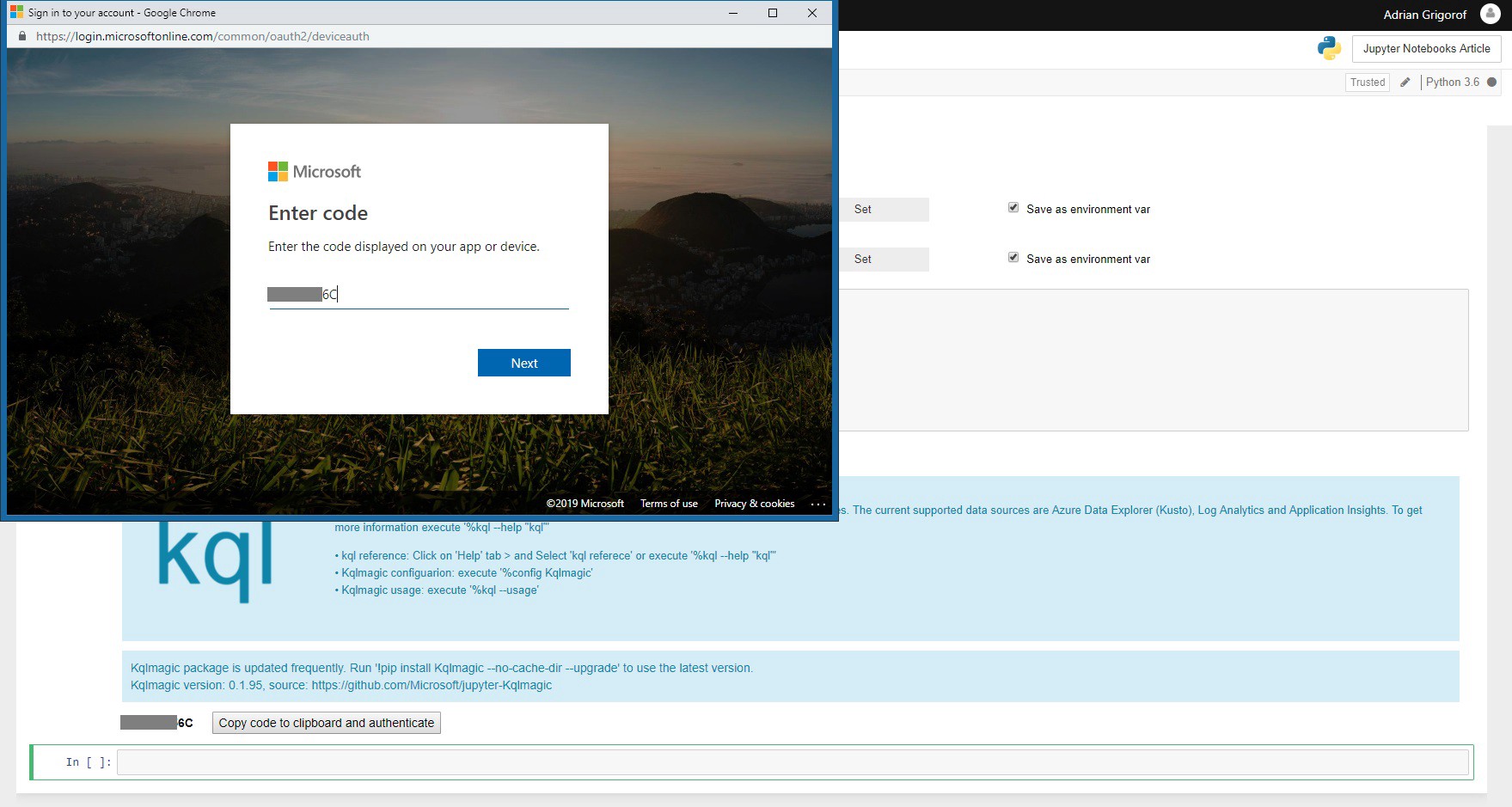

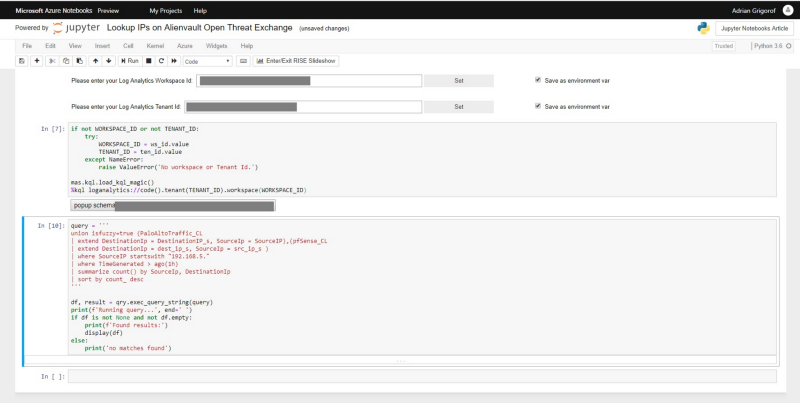

The first task would be to connect to Azure Sentinel and extract the list of IPs that my servers connected to for a specific time interval. Since the notebook is not something that is automatically allowed to query my logs, I have to authenticate my connection. The next cells allow the user to interactively enter the Azure Sentinel details (Workspace Id and Log Analytics Tenant Id):

These can be set as environment variables so the user will not be asked for them every time. The code in this cell and the one after it comes from the sample Microsoft notebooks, which one can always use as working examples.
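To illustrate the environment variable idea, here is a minimal sketch of my own (not the code from the Microsoft notebooks). It reads a setting from the environment and only prompts when the variable is missing. The function name `get_setting` and the variable names `SENTINEL_WORKSPACE_ID` and `SENTINEL_TENANT_ID` are hypothetical.

```python
import os


def get_setting(env_name, prompt, ask=input):
    """Return the value of env_name, or ask the user if it is not set."""
    value = os.environ.get(env_name, "").strip()
    if not value:
        # Fall back to an interactive prompt, as the sample cells do.
        value = ask(prompt).strip()
    return value


# Typical use in a notebook cell (variable names are hypothetical):
# workspace_id = get_setting("SENTINEL_WORKSPACE_ID", "Workspace Id: ")
# tenant_id = get_setting("SENTINEL_TENANT_ID", "Log Analytics Tenant Id: ")
```

The `ask` parameter defaults to the built-in `input`, so the prompt can be swapped for something else when the code is reused outside a notebook.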

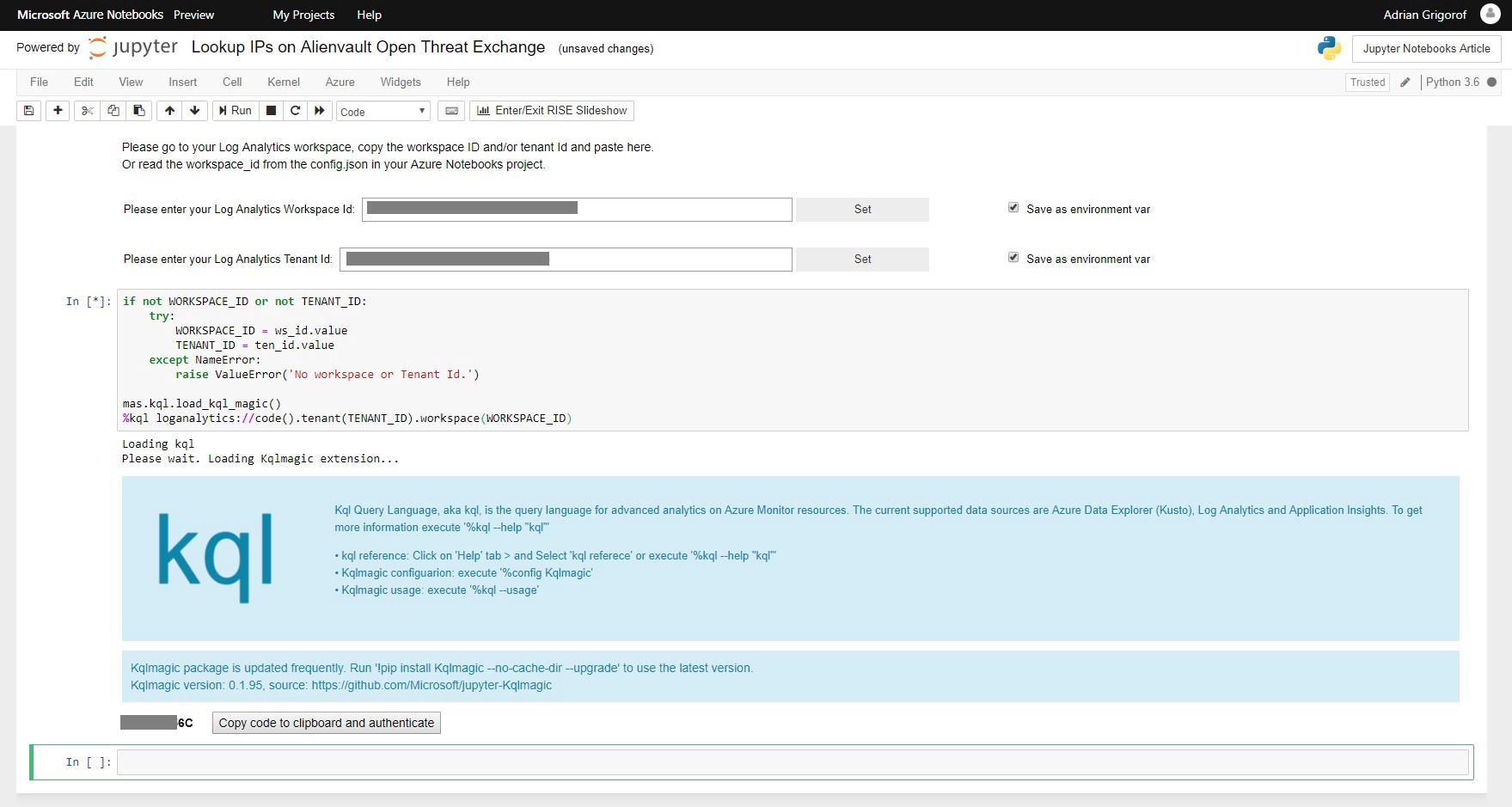

The next cell will perform the authentication and connect Kqlmagic to the Azure Sentinel backend. Again, the authentication is interactive:
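For reference, Kqlmagic is pointed at a workspace through a connection string. The sketch below builds one in the form I have seen in the sample notebooks, where `code()` requests the interactive device-code login. Treat the exact format as an assumption and compare it with the Microsoft sample before relying on it. The helper name `build_connection_string` is hypothetical.

```python
def build_connection_string(tenant_id, workspace_id):
    """Build a Log Analytics connection string for Kqlmagic.

    Assumption: the format follows the Microsoft sample notebooks,
    with code() selecting interactive device-code authentication.
    """
    return (
        f'loganalytics://code().tenant("{tenant_id}")'
        f'.workspace("{workspace_id}")'
    )


# Placeholder values only; use your own tenant and workspace ids.
print(build_connection_string("<tenant-id>", "<workspace-id>"))
```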

Once authenticated, we are ready to start retrieving and processing the data.

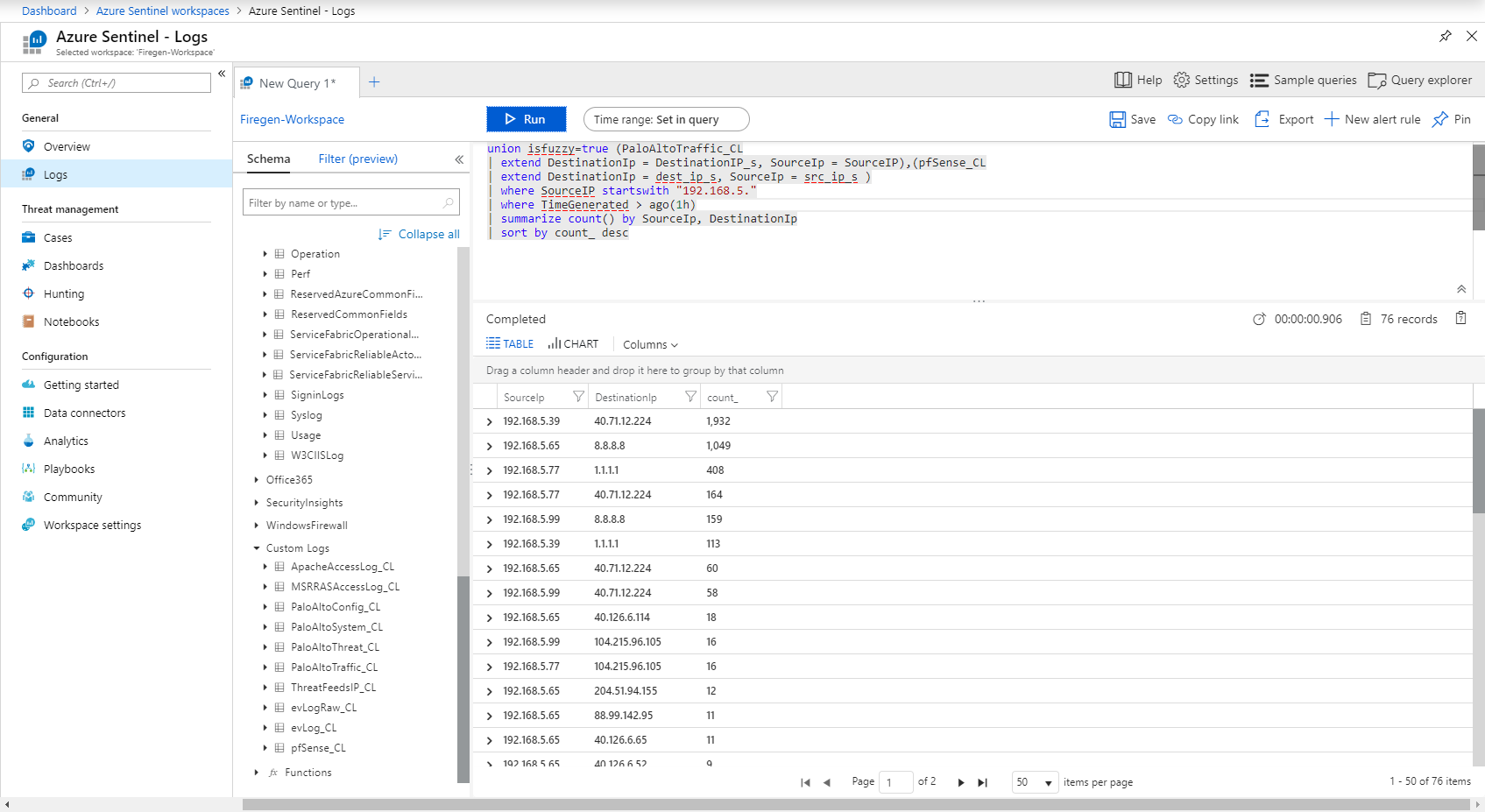

The first step is to set a time interval and retrieve the records matching our criteria using a Kusto query. In the Azure Sentinel Logs blade I can build up and test the right query syntax. For example, I want the destination IP addresses contacted by my internal hosts in the last hour. In Azure Sentinel Logs I can get this information with the following query:

We can see that the query returned 76 records, indicating the internal hosts, the external ones and how many connections, sorted by count.

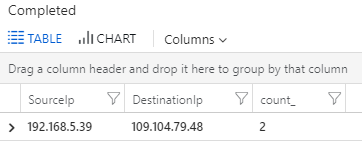

Just to be sure that the “bad” IP is there, I searched just for it and I can see it recorded:

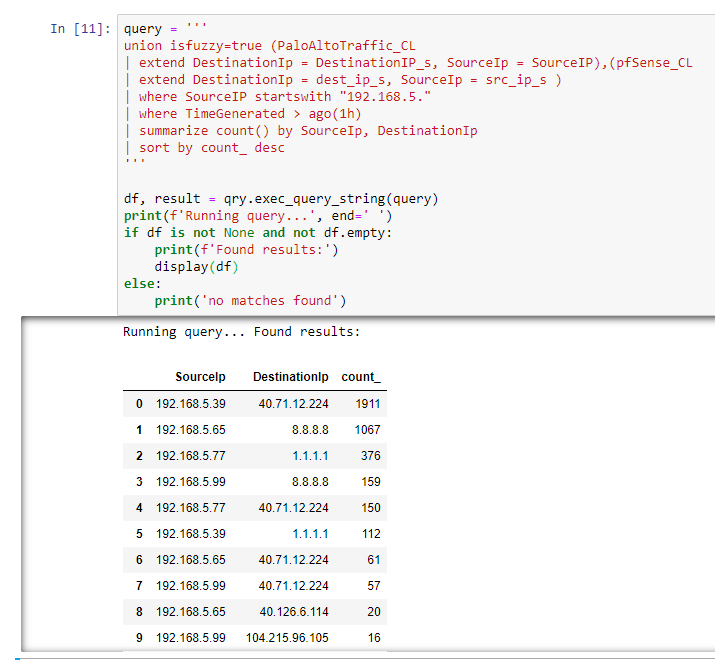

Next, to run the same query in the notebook, we use the following commands:

I configured a variable called query that stores (between triple quotes) the same query that I used in Azure Sentinel to extract the data.

Next, I use the exec_query_string(query) command (part of the msticpy.nbtools.kql library) to run the query against the Azure Sentinel backend and store the returned data in a df variable (a data frame; in layman’s terms one can consider it a table). I also use a display command to print the content of the df variable if the query was successful; otherwise I just print a message that no records were returned. Sure enough, if I run this command I get the expected results:
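The pattern described above can be sketched as follows. This is not my actual notebook cell: the table name `Firewall_CL` and the column names are hypothetical, since the query depends on your own custom logs. The `runner` parameter stands in for `exec_query_string`, so the sketch can be tried without a Sentinel connection.

```python
# Hypothetical table and column names; adjust them to your own logs.
query = """
Firewall_CL
| where TimeGenerated > ago(1h)
| summarize Connections = count() by SourceIP, DestinationIP
| sort by Connections desc
"""


def run_query(query, runner):
    """Run the query with runner and report whether any rows came back.

    runner stands in for exec_query_string: it takes the query text and
    returns a table-like object with a length (for example a pandas
    DataFrame) or None.
    """
    df = runner(query)
    if df is None or len(df) == 0:
        print("No records returned.")
        return None
    print(f"{len(df)} records returned.")
    return df
```

In the notebook, the call would look like `df = run_query(query, exec_query_string)`, followed by `display(df)` to show the table.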

If some IPs and numbers look a bit different from the Azure Sentinel query results, it is because the search covers the connections in the last hour, and these may change when I run the notebook query a few minutes after the original query.

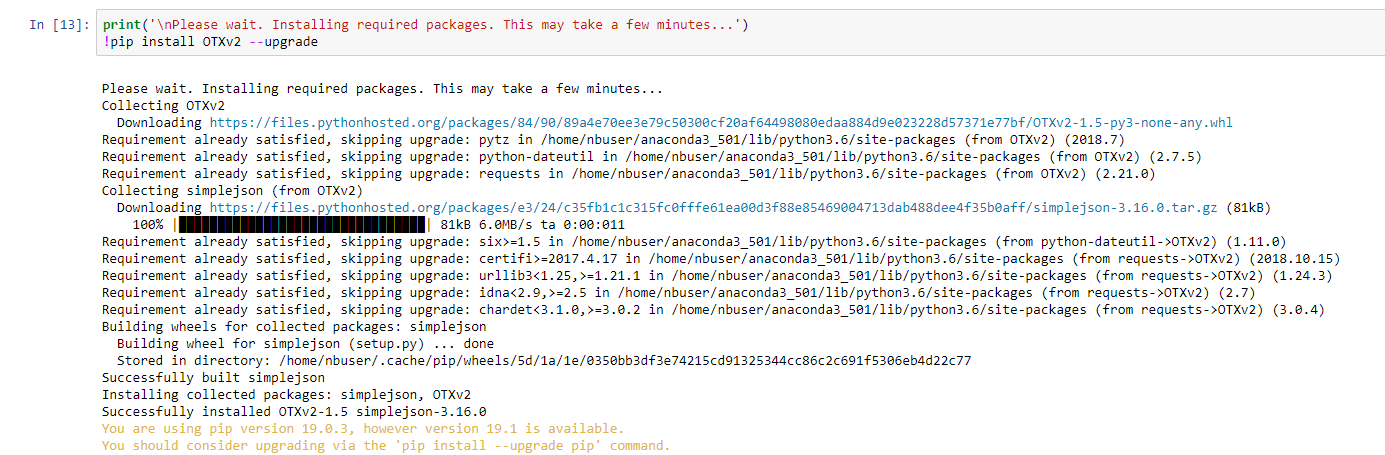

Now, I need to take each of the returned IPs and check it against Alienvault OTX. For this I can use the OTX API available for Python (how do I know this? I googled it!). As with the other libraries, the OTX one first has to be downloaded and installed within the notebook:

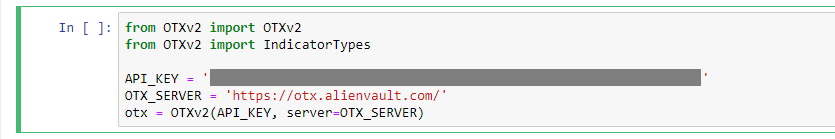

Once done, to import all the needed functions I used the following code:

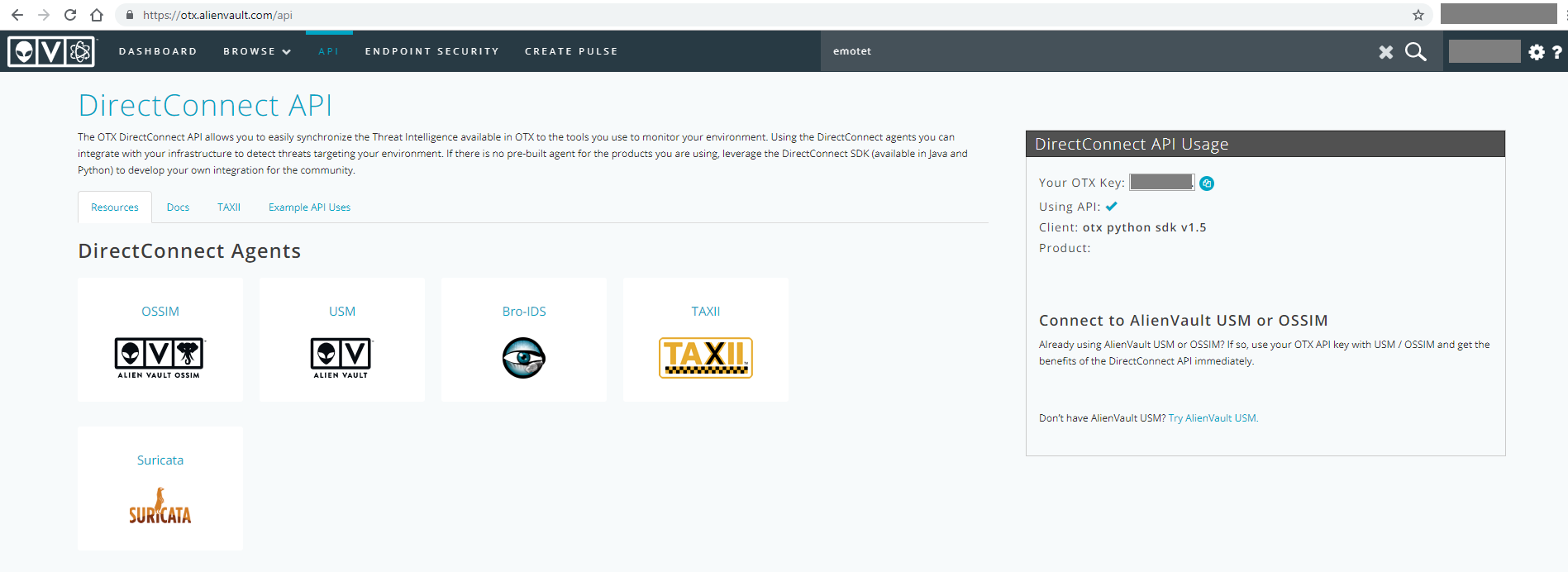

You will notice that an API_KEY is required. A free key is available from Alienvault OTX to every user who has created an account (also free):

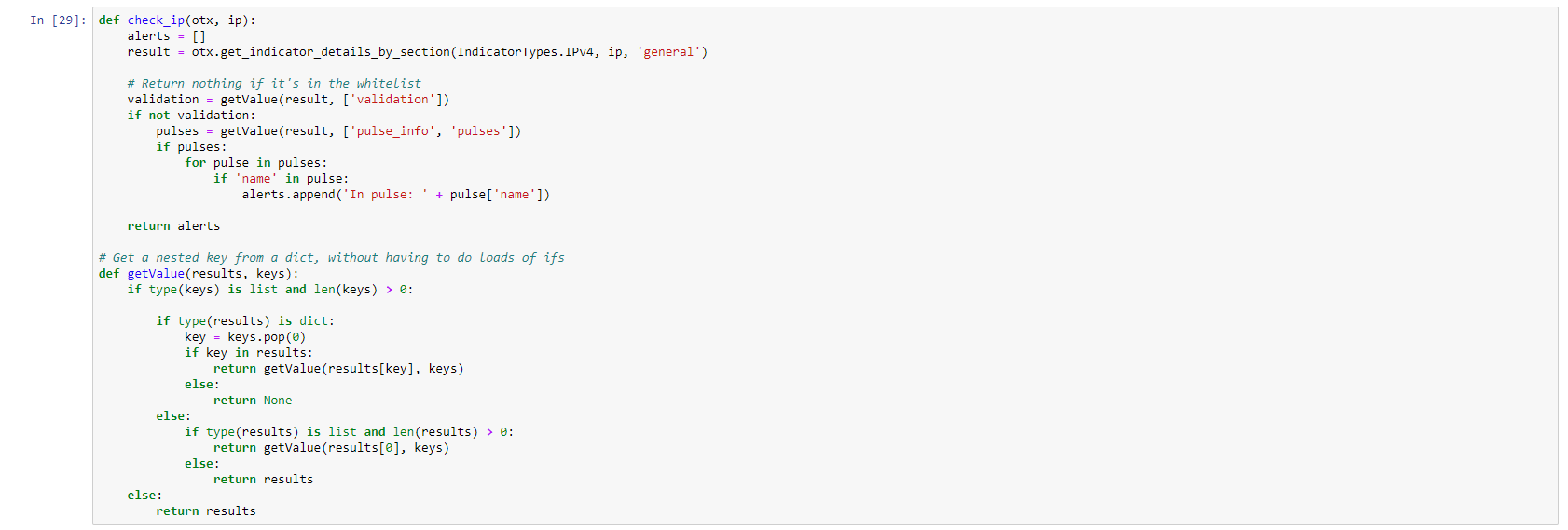

Running the cell should not generate any message as we are just importing the OTX functions. Next, I define a “check_ip” function that I can use to verify an IP against Alienvault OTX pulses. Pulses are data structures in OTX that can contain IoCs such as IP addresses, domain names, hashes, etc.

The code uses functions from the OTX library. I also define a function called getvalue that simplifies extracting the data returned from OTX. I found these functions, again through Google, in sample Python code for using OTX (so Google is your friend for this type of effort).
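The two functions can be sketched roughly as below. This is a simplified version written for illustration, not the exact sample code. To keep it runnable without an API key, `check_ip` receives a hypothetical `fetch_general` callable that returns the “general” section of the OTX details for an IP address. With the OTX library, I would expect that callable to wrap `otx.get_indicator_details_by_section(IndicatorTypes.IPv4, ip, 'general')`, but treat that call as an assumption and verify it against the OTX SDK.

```python
def getvalue(results, keys):
    """Follow keys through nested dicts and lists; None if a key is missing."""
    for key in keys:
        # If this level is a list, look inside its first element.
        if isinstance(results, list):
            results = results[0] if results else None
        if not isinstance(results, dict) or key not in results:
            return None
        results = results[key]
    return results


def check_ip(ip, fetch_general):
    """Return the names of the OTX pulses that mention ip.

    fetch_general is a hypothetical callable that takes an IP address
    and returns the 'general' section of the OTX response as a dict.
    """
    details = fetch_general(ip)
    # Assumption: a non-empty 'validation' entry means OTX itself
    # considers the address known-good, so no alert is raised.
    if getvalue(details, ["validation"]):
        return []
    pulses = getvalue(details, ["pulse_info", "pulses"]) or []
    return [pulse["name"] for pulse in pulses if "name" in pulse]
```

Passing the lookup in as a parameter also makes it easy to test the logic with a canned response before spending API calls.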

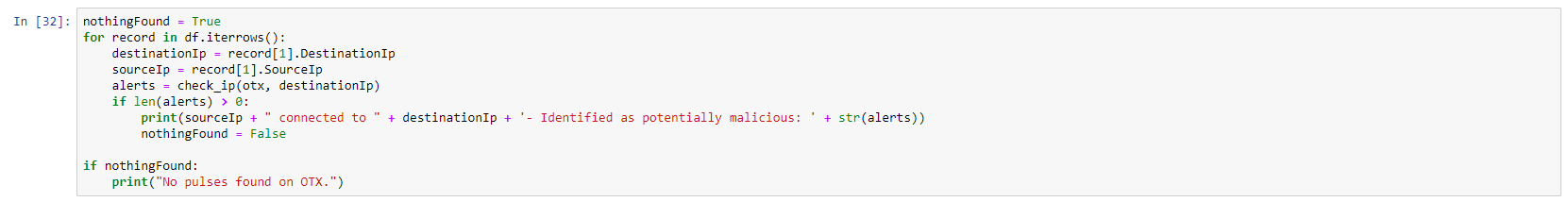

Next, I iterate through all the records (rows) in the df dataframe and verify each destination IP against the OTX database. If the result is positive, I print the source host (the internal IP), the destination host and the information returned from OTX. I use a variable called nothingFound to display “No pulses found on OTX.” if the data is “clean”:
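A minimal sketch of this loop is shown below, under a few assumptions of mine: the rows are plain dictionaries (for a pandas data frame, `df.to_dict("records")` produces them), the column names `SourceIP` and `DestinationIP` are hypothetical, and `lookup` is any callable that takes an IP address and returns a list of pulse names.

```python
def report_hits(rows, lookup):
    """Print every connection whose destination IP appears in a pulse.

    Returns True if at least one hit was printed, False otherwise.
    """
    nothing_found = True
    for row in rows:
        pulses = lookup(row["DestinationIP"])
        if pulses:
            nothing_found = False
            print(
                f'{row["SourceIP"]} -> {row["DestinationIP"]}: '
                + "; ".join(pulses)
            )
    if nothing_found:
        print("No pulses found on OTX.")
    return not nothing_found
```

Because each lookup is a separate call to OTX, results are printed as soon as each IP is checked rather than collected and shown at the end.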

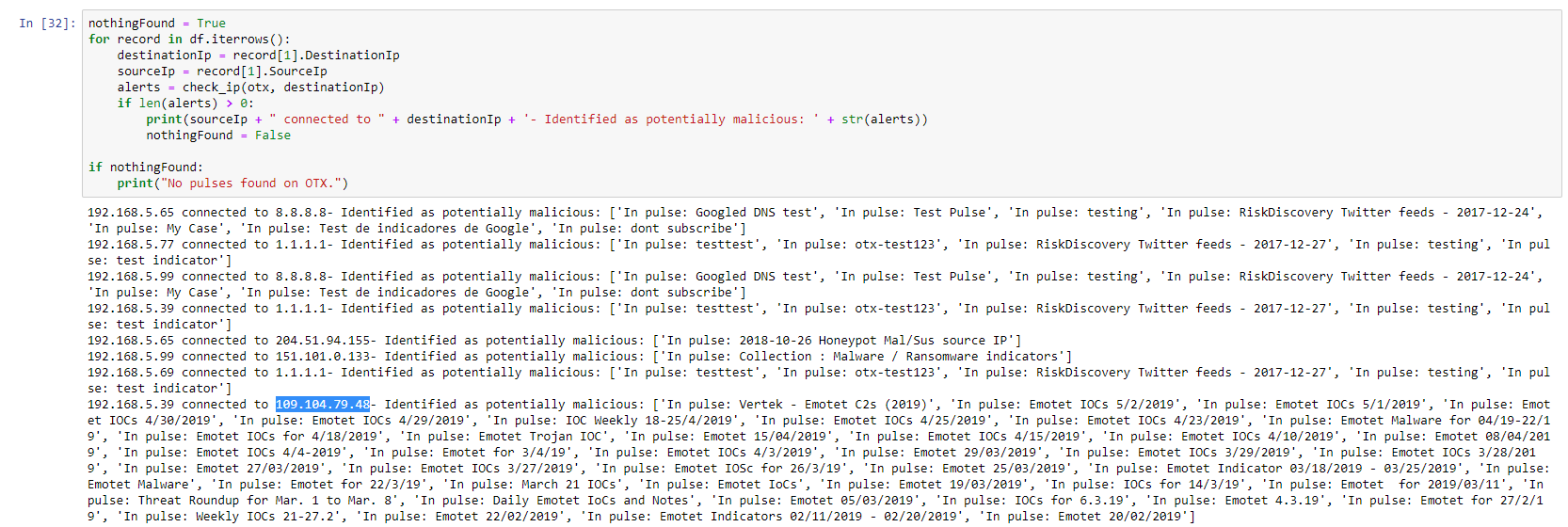

When we run the code in this cell, the notebook will check each IP through Alienvault OTX. Since this is not an instant process, the results will be displayed as each IP is verified. As long as the cell shows an asterisk ([*]) on its side, it is still running. In this case I got the following output:

As one can see, I got multiple hits. Some are matching connections to DNS servers (they are listed in a “DNS Test” pulse on OTX) but others match malicious IPs. The test one (109.104.79.48) is included, but I got some additional ones that I did not expect:

204.51.94.155 — Identified as potentially malicious:

151.101.0.133 — Identified as potentially malicious:

Searching OTX for 204.51.94.155 shows that it is indeed part of that pulse, but the pulse does not contain malicious IPs; it is a list of known honeypots and benign scanners. This address in particular belongs to dshield.org, and I know that one of my servers retrieves a threat intel list from it.

151.101.0.133 appears to be used by some GitHub projects, and the pulse in OTX is described as “Collection : Malware / Ransomware indicators - a generic collection obtained from various sources, for a quick check on the IOC inventory for boosting defense.”. Considering that this pulse is from 2017 and doesn’t appear to be properly validated, I discount it as a false positive, especially since I did access GitHub to download code.

In conclusion, using a Jupyter Notebook allowed me to extract very specific data from Sentinel and enrich it with content available through the API of a threat intelligence sharing site, and this is just a simple example. While Sentinel benefits from the powerful capabilities of its native Kusto Query Language, the option of using Jupyter Notebooks adds capabilities that can greatly enhance the level of analysis available. The skills required to use it are not specific to Sentinel, so all time invested in learning Python and Jupyter Notebooks will be of benefit in many situations. I can share this notebook with anyone so they can reuse it with very little effort. In time, the Sentinel community should build a list of notebooks that can be fine-tuned and improved on a regular basis.

The source code used in this sample notebook is available here: Jupyter Notebooks Article. Since my code is extracting data from custom logs, you would need to adjust the tables accordingly to match your environment.

Ian Hellen, Principal Software Engineer at Microsoft, has published a series of articles with extensive examples on using Azure Sentinel in combination with Jupyter Notebooks. His articles can be used as a showcase of applications of various log sources, correlations, external lookups and visualizations:

Security Investigation with Azure Sentinel and Jupyter Notebooks — Part 1

Security Investigation with Azure Sentinel and Jupyter Notebooks — Part 2

Another great article on using Jupyter Notebooks for threat hunting comes from Maarten Goet (also from Microsoft): Threat Hunting in the cloud with Azure Notebooks: supercharge your hunting skills using Jupyter and KQL.